Nvidia H100 Dedicated Servers: The Standard for LLM Training & Generative AI

Dominate the 2026 AI landscape with one of the world's most advanced Tensor Core GPUs. The Nvidia H100 is not just hardware; it is the engine behind many of the world's most powerful Large Language Models (LLMs). Built on the Hopper architecture, our dedicated H100 servers deliver up to 9x faster AI training and up to 30x faster inference than the previous generation, making them critical infrastructure for organizations scaling intelligence.

Configuration Options & Deployment Flexibility

Dedicated H100 servers come in multiple configurations to match your workload and budget requirements. Our platform offers flexible CPU-to-GPU ratios, memory configurations, and NVLink topologies.

Single GPU Configuration

Ideal for: Inference-heavy workloads, model serving, experimentation

Specs: 1x H100 GPU, 256GB+ system RAM, 2x Xeon Platinum 8592+ CPU

Use Case: API endpoint serving, micro-batch inference, model testing

Network: 100Gbps RoCE or Ethernet for integration

Dual GPU Configuration (NVLink Connected)

Ideal for: Small-scale training, multi-model serving, hybrid inference/training

Specs: 2x H100 GPUs, 768GB system RAM, 4x Xeon Platinum 8592+ CPU

Performance: Near-linear 2x scaling with direct GPU-to-GPU communication over NVLink 4.0

Use Case: Fine-tuning 7B-13B models, serving multiple models simultaneously
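As a rough sizing check for this configuration, the sketch below estimates the memory needed for full fine-tuning. It assumes the common rule of thumb of about 16 bytes per parameter for mixed-precision Adam and ignores activations; the function name and the rule itself are illustrative assumptions, not measurements from our platform.

```python
def full_finetune_memory_gb(params_billion, bytes_per_param=16):
    """Rough weight + gradient + optimizer memory in GB (activations excluded).

    Assumption: 16 bytes/param = 2 (fp16 weights) + 2 (fp16 gradients)
    + 12 (fp32 master weights plus Adam momentum and variance).
    """
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB
    return params_billion * bytes_per_param


GPU_MEMORY_GB = 80  # per H100
NUM_GPUS = 2        # dual GPU configuration

for size in (7, 13):
    need = full_finetune_memory_gb(size)
    fits = need <= GPU_MEMORY_GB * NUM_GPUS
    print(f"{size}B params: ~{need:.0f} GB of training state, "
          f"fits in {NUM_GPUS}x{GPU_MEMORY_GB} GB: {fits}")
```

Under these assumptions a 7B model fits comfortably, while a 13B model would likely need a parameter-efficient method (such as LoRA) or optimizer offload on two GPUs.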

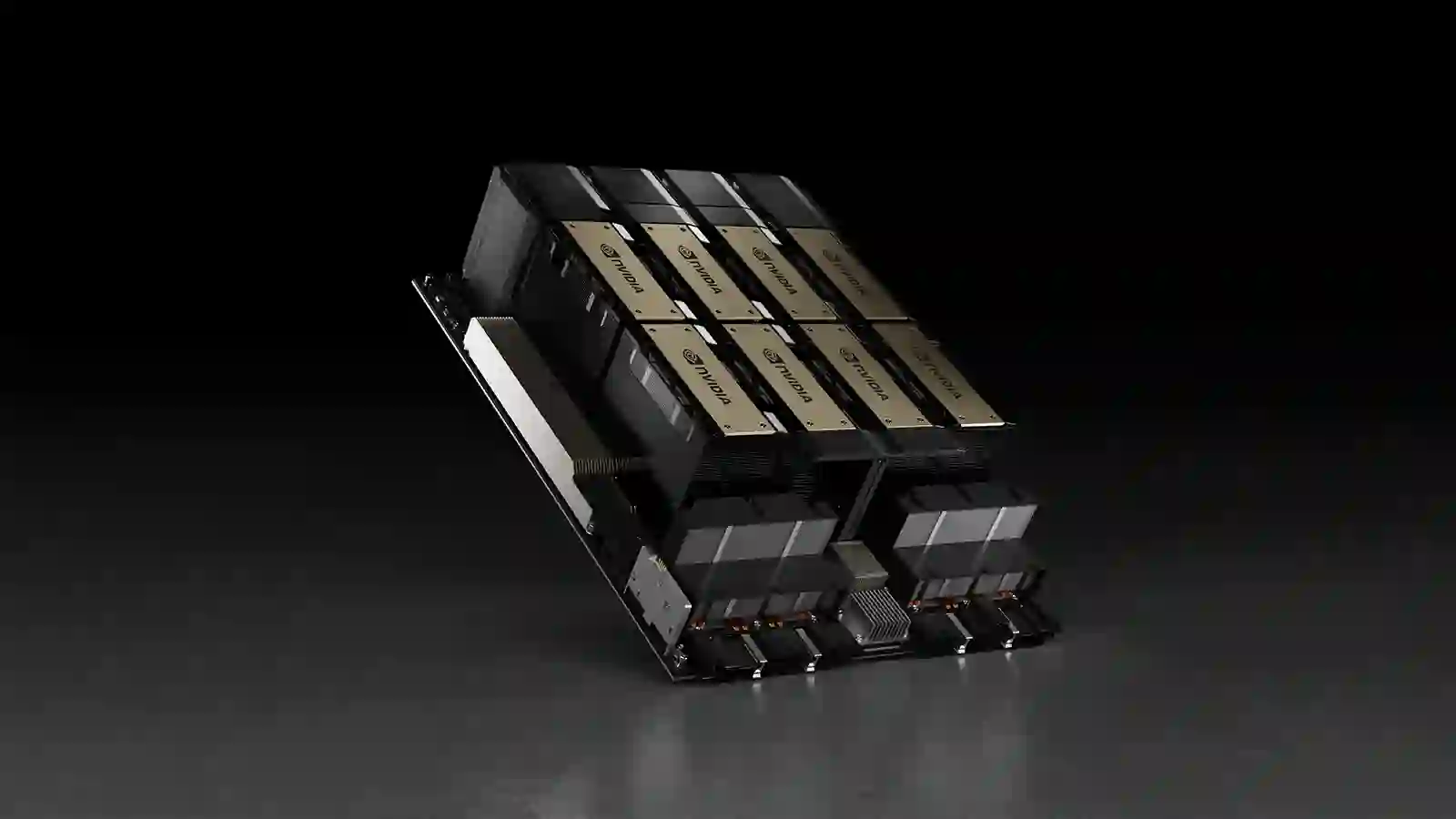

8x GPU Cluster Configuration (Full NVLink Topology)

Ideal for: Enterprise training, production inference at scale, research labs

Specs: 8x H100 GPUs, 2TB system RAM, dual-socket Xeon Platinum CPU

Performance: Full fabric scaling with <1.5% communication overhead

Network: 400Gbps InfiniBand or 200Gbps Ethernet for multi-node clusters

Typical Deployment: 2-8 nodes for distributed training of 100B+ parameter models
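To get a feel for how node count affects wall-clock time, the sketch below uses the widely cited approximation that training costs about 6 x parameters x tokens floating-point operations. The peak throughput (989 TFLOPS dense BF16 per H100 SXM), the 40% utilization figure, and the token budget are all assumptions for illustration; real utilization varies widely with model and software stack.

```python
def training_days(params, tokens, num_gpus,
                  peak_flops_per_gpu=989e12, utilization=0.4):
    """Estimate training time in days from the 6 * N * D rule of thumb.

    peak_flops_per_gpu: assumed dense BF16 peak for one H100 SXM.
    utilization: assumed fraction of peak actually sustained.
    """
    total_flops = 6 * params * tokens
    sustained_flops_per_sec = num_gpus * peak_flops_per_gpu * utilization
    return total_flops / sustained_flops_per_sec / 86_400  # seconds per day


PARAMS = 100e9  # 100B-parameter model
TOKENS = 100e9  # hypothetical token budget, e.g. continued pre-training

for nodes in (2, 4, 8):
    gpus = nodes * 8  # 8 GPUs per node
    print(f"{nodes} nodes ({gpus} GPUs): ~{training_days(PARAMS, TOKENS, gpus):.0f} days")
```

The estimate scales inversely with GPU count, so it is most useful for comparing cluster sizes rather than predicting an exact schedule.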

Customization Options

Storage

NVMe SSD (14TB-28TB) or SAN/NAS integration

Networking

Mellanox InfiniBand HDR (200Gbps), Nvidia BlueField SmartNIC, or standard 100Gbps Ethernet

Cooling

Liquid or air-cooled facilities in enterprise-grade data centers

Redundancy

Hot-swap GPU cards, redundant power supplies, automatic failover

The Specs: Hopper Architecture Deep Dive

The H100 is engineered to relieve the memory-bandwidth bottleneck that limits large-model training and inference.

| Specification | Nvidia H100 |
|---|---|
| Architecture | Nvidia Hopper (4nm) |
| Memory | 80GB HBM3 |
| Memory Bandwidth | 3.35 TB/s |
| AI Compute | 4th-Gen Tensor Cores |
| Interconnect | 900 GB/s NVLink |
| Key Feature | MIG (up to 7 instances) |
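Why memory bandwidth matters for LLMs can be shown with a back-of-the-envelope bound: at batch size 1, generating each token requires reading every model weight once, so tokens per second cannot exceed bandwidth divided by model size. The sketch below applies that simplification (it ignores the KV cache, batching, and kernel overheads); the model sizes are hypothetical examples.

```python
def decode_tokens_per_sec_upper_bound(params_billion, bytes_per_param, bandwidth_tb_s):
    """Upper bound on single-stream decode speed when weight reads dominate."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tb_s * 1e12 / weight_bytes


H100_BW_TB_S = 3.35  # H100 memory bandwidth
A100_BW_TB_S = 2.0   # A100 memory bandwidth, for comparison

# (parameters in billions, bytes per parameter): hypothetical deployments
for params, nbytes in ((13, 2), (70, 1)):
    h100 = decode_tokens_per_sec_upper_bound(params, nbytes, H100_BW_TB_S)
    a100 = decode_tokens_per_sec_upper_bound(params, nbytes, A100_BW_TB_S)
    print(f"{params}B @ {nbytes} byte/param: H100 <= {h100:.0f} tok/s, A100 <= {a100:.0f} tok/s")
```

Real deployments batch many requests together, which raises aggregate throughput well above this single-stream bound.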

Best For: Massive Scale AI Workloads

Training Foundation Models

Built for training 175B+ parameter models (GPT-3 scale and larger, such as the biggest Llama 3 variants) from scratch

Real-Time LLM Inference

Delivers low-latency token generation for chatbots serving large numbers of concurrent users

Scientific Computing (HPC)

Accelerates genome sequencing, fluid dynamics, and climate modeling by up to 7x compared to the A100

Mixture of Experts (MoE) Models

High memory bandwidth relieves bottlenecks in sparse models, where each token activates only a subset of the expert weights

H100 Performance Comparison

How the H100 stacks up against the previous generation.

| Feature | Nvidia H100 (SXM) | Nvidia A100 | Delta |
|---|---|---|---|
| FP8 Tensor Core (with sparsity) | 3,958 TFLOPS | N/A | New in Hopper |
| FP16 Tensor Core (with sparsity) | 1,979 TFLOPS | 624 TFLOPS | ~3.2x Faster |
| Memory BW | 3.35 TB/s | 2.0 TB/s | ~1.7x Faster |
| LLM Training | Up to 9x Speedup | Baseline | H100 Wins |

Server Configurations & Scalability

We offer H100s in configurations designed to maximize PCIe Gen5 and NVLink speeds.

Single Node (1x H100)

For fine-tuning mid-sized models and heavy inference

HGX H100 (4x or 8x Cluster)

Interconnected via NVLink for massive model training

Networking

Paired with dual 100GbE or InfiniBand uplinks to ensure data feeds never stall the GPU

Technical FAQ: Nvidia H100

Common architectural and operational questions for deployment.

Can I use NVLink with H100 PCIe GPUs?

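Only in a limited form. H100 PCIe cards can be linked in pairs with NVLink bridges (up to 600 GB/s between the two GPUs), but the full 900 GB/s NVLink fabric across all GPUs requires SXM form-factor GPUs. Without a bridge, PCIe H100s communicate over PCIe 5.0 (128 GB/s), which is sufficient for data parallelism but generally too slow for tensor parallelism. Choose SXM for multi-GPU clusters.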
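The gap between the two interconnects can be illustrated with the standard ring all-reduce cost model, in which each GPU transfers roughly 2 x (N - 1) / N times the buffer size. The sketch below plugs in the 128 GB/s PCIe 5.0 and 900 GB/s NVLink figures quoted on this page; it ignores latency and assumes the quoted peak rates are fully achievable, so both numbers are optimistic and only the ratio is meaningful. The gradient size is a hypothetical example.

```python
def ring_allreduce_seconds(buffer_gb, num_gpus, bandwidth_gb_s):
    """Bandwidth-only estimate of one ring all-reduce over num_gpus GPUs."""
    traffic_gb = 2 * (num_gpus - 1) / num_gpus * buffer_gb
    return traffic_gb / bandwidth_gb_s


GRADIENTS_GB = 14  # hypothetical: 7B parameters stored as 2-byte values
NUM_GPUS = 8

pcie = ring_allreduce_seconds(GRADIENTS_GB, NUM_GPUS, 128)    # PCIe 5.0
nvlink = ring_allreduce_seconds(GRADIENTS_GB, NUM_GPUS, 900)  # NVLink

print(f"PCIe 5.0: {pcie * 1000:.0f} ms per all-reduce")
print(f"NVLink:   {nvlink * 1000:.0f} ms per all-reduce")
print(f"Ratio:    {pcie / nvlink:.1f}x")
```

Data parallelism performs this exchange once per training step, whereas tensor parallelism communicates inside every layer, which is why the slower link hurts it far more.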

What's the difference between H100 80GB and smaller variants?

The standard H100 includes 80GB of HBM3 memory. Offers listing 40GB most likely refer to the previous-generation A100, which shipped in 40GB and 80GB versions. The 80GB model is the current H100 standard across cloud and on-premise deployments.

Can I run inference-only workloads on H100?

Yes, but it's typically not cost-effective for single-model serving. The H100 shines when serving up to 7 isolated models simultaneously (via MIG partitioning) or handling extreme throughput (thousands of requests per second). For single-model inference, the L40S is often more cost-efficient.
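A quick way to judge whether a set of models suits MIG is to check them against the instance limit and the memory available per instance. The sketch below assumes one model per instance and roughly 10 GB per instance when the GPU is split seven ways (the smallest MIG profile on the 80GB card); the model footprints are hypothetical.

```python
MAX_MIG_INSTANCES = 7  # MIG instance limit on the H100
SLICE_MEMORY_GB = 10   # assumption: memory per instance with a seven-way split


def fits_one_model_per_instance(model_footprints_gb):
    """Return True if every model can get its own smallest-size MIG instance."""
    if len(model_footprints_gb) > MAX_MIG_INSTANCES:
        return False
    return all(gb <= SLICE_MEMORY_GB for gb in model_footprints_gb)


# Hypothetical serving footprints in GB (weights plus runtime overhead)
print(fits_one_model_per_instance([4, 6, 8, 3, 9, 5, 7]))  # seven small models
print(fits_one_model_per_instance([4, 6, 12]))             # one model too large for a slice
```

Models that exceed a single slice can still use a larger MIG profile, at the cost of fewer instances per GPU.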

How does H100 compare to consumer GPUs like the RTX 4090?

The H100 is roughly 4–6x faster for LLM training and 2–3x faster for inference, but costs 8–10x more. The RTX 4090 is excellent for development and small-scale inference; the H100 is the better fit for production-scale systems.

What operating systems are supported?

Linux (Ubuntu 22.04 LTS recommended), AlmaLinux, Rocky Linux. Windows Server is available but not recommended for deep learning workloads.

Can I access these servers from my local machine?

Yes. You receive SSH access (Linux/Mac) and SSH/RDP access (Windows). We also provide Jupyter Lab access for interactive development and direct SFTP for file transfer.
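Once logged in over SSH, a short script can confirm that the expected GPUs are visible. The sketch below calls `nvidia-smi` with its CSV query options and parses the result; the helper names are illustrative, and the sample line in the comment only shows the general shape of the output, which depends on the driver version.

```python
import subprocess


def parse_gpu_list(csv_text):
    """Parse 'name, memory.total' CSV lines into a list of dicts."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, memory = [field.strip() for field in line.split(",")]
        gpus.append({"name": name, "memory": memory})
    return gpus


def query_gpus():
    """Run nvidia-smi on the server and return the parsed GPU list."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_list(result.stdout)


# Illustrative input shape: "NVIDIA H100 80GB HBM3, 81559 MiB"
```

Calling `query_gpus()` on an 8x GPU node should return eight entries; a shorter list suggests a driver or hardware issue worth raising with support.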