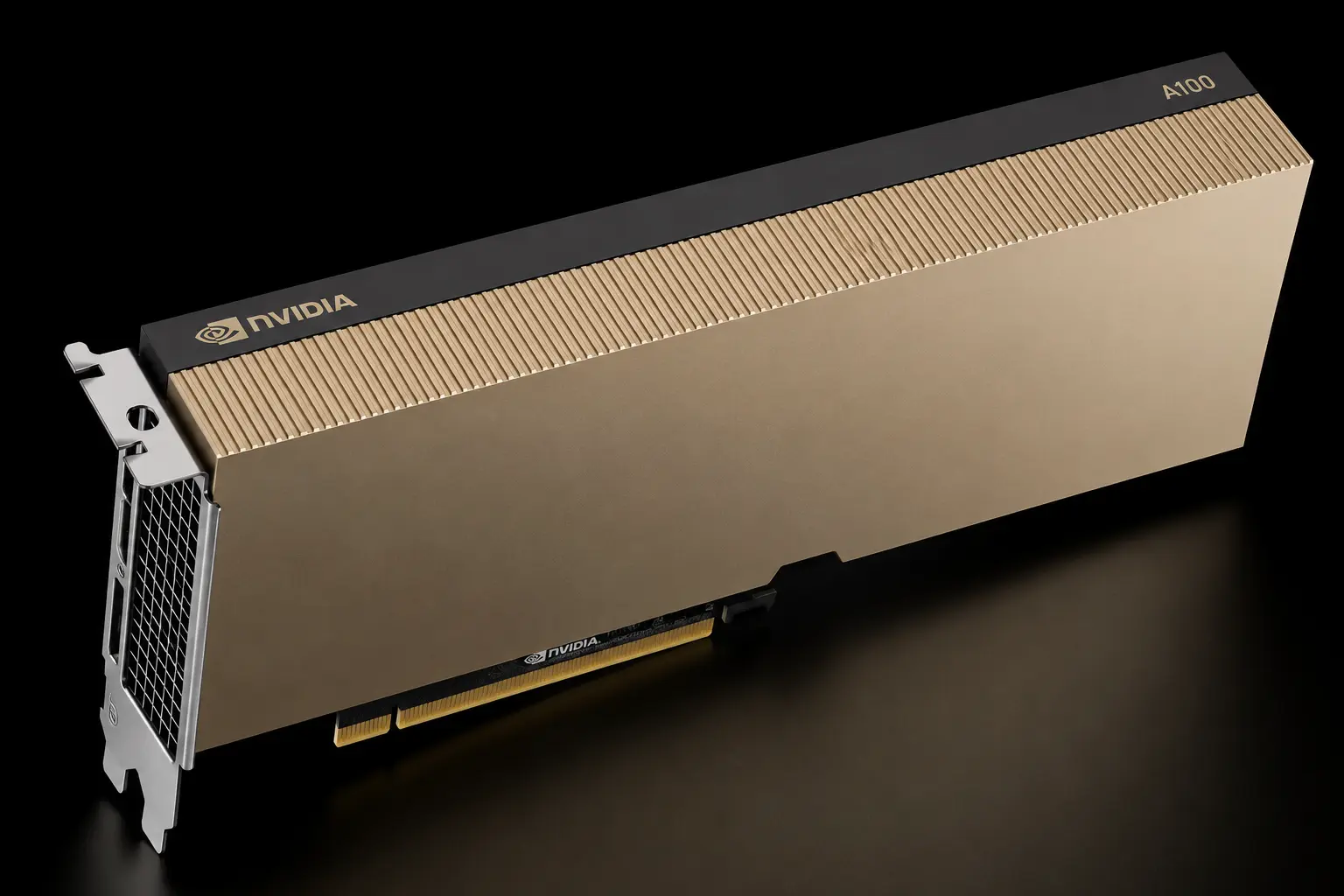

Nvidia A100 Dedicated Servers: The Standard for Production AI

The Nvidia A100 is one of the most widely deployed data center GPUs. Built on the Ampere architecture, it remains a backbone of AI infrastructure in 2026. While newer chips push peak speeds, the A100 balances enterprise reliability, large VRAM, and cost efficiency, and it is available in both 40GB and 80GB variants.

Server Configurations

We offer flexible A100 deployments in both 40GB and 80GB variants, paired with Intel Xeon Gold or AMD EPYC processors — tailored to your specific workload and budget.

PCIe Gen4 Version

Ideal for: Inference nodes, single-node training, development.

GPU Options: 1x or 2x A100 40GB / 80GB variants available.

CPU Options: Intel Xeon Gold (e.g. 6230, 6330) or AMD EPYC (e.g. 7313, 7813).

Integration: Easy to integrate into standard enterprise server chassis.

Cost: Cost-efficient for workloads that do not need an NVLink mesh.

SXM4 (HGX A100)

Ideal for: Large model training, unified memory workloads.

GPU Options: 4x or 8x A100 80GB, fully interconnected via NVLink.

Performance: Up to 640GB of aggregate VRAM (8 x 80GB), with every GPU able to reach its peers' memory over NVLink.

Throughput: 600 GB/s GPU-to-GPU bandwidth.

CPU Options: Intel Xeon Gold 6336Y or equivalent high-core-count platforms.
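The aggregate-memory and bandwidth figures above can be sanity-checked with simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, the 64 GB/s PCIe Gen4 x16 figure is the peak bidirectional number from Nvidia's A100 datasheet rather than something stated on this page, and real transfers will run below these peaks.

```python
# Back-of-the-envelope sizing for HGX A100 nodes.
# All bandwidths are peak figures; sustained throughput is lower in practice.

NVLINK_GB_PER_S = 600.0     # total NVLink bandwidth per A100 SXM4 GPU
PCIE_GEN4_GB_PER_S = 64.0   # PCIe Gen4 x16, bidirectional (assumed datasheet peak)


def aggregate_vram_gb(num_gpus: int, vram_per_gpu_gb: int = 80) -> int:
    """Total VRAM across all GPUs in one node."""
    return num_gpus * vram_per_gpu_gb


def ideal_transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Lower bound on the time to move payload_gb at the given peak bandwidth."""
    return payload_gb / bandwidth_gb_per_s


for gpus in (4, 8):
    print(f"{gpus}x A100 80GB: {aggregate_vram_gb(gpus)} GB aggregate VRAM")

payload = 60.0  # GB of tensors to exchange, a hypothetical workload
print(f"NVLink: {ideal_transfer_seconds(payload, NVLINK_GB_PER_S):.2f} s")
print(f"PCIe:   {ideal_transfer_seconds(payload, PCIE_GEN4_GB_PER_S):.2f} s")
```

The gap between the two transfer times is the main reason SXM4 nodes are preferred when a model has to be split across GPUs.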

Deployment Features

Storage

High-speed NVMe arrays to keep the GPUs supplied with data and minimize loading stalls. SSD and NVMe RAID options available.

Networking

200Gbps InfiniBand or Ethernet options for cluster interconnects.

Uptime

Tier III/IV data centers with redundant power and cooling.

Support

24/7 hardware replacement and driver-level support.


The Specs: Ampere Architecture & MIG (Multi-Instance GPU)

The A100 introduced technologies that defined modern AI computing. It is available with 40GB of HBM2 or 80GB of HBM2e memory.

Architecture

Nvidia Ampere

Memory

40GB HBM2 / 80GB HBM2e

Bandwidth

1.6 / 2.0 TB/s

Partitioning

7x MIG Instances

Compute

3rd-Gen Tensor

Interconnect

600 GB/s NVLink

Best For: Production & Research

Large Scale Inference

A cost-effective way to serve models like Llama 3 or Mixtral to thousands of concurrent users.

Fine-Tuning LLMs

With up to 80GB of memory, the A100 handles LoRA/QLoRA fine-tuning at batch sizes that 24GB consumer cards cannot match.

High Performance Computing

A proven choice for double-precision (FP64) simulations in physics, weather forecasting, and the energy sector, with 9.7 TFLOPS of FP64 (19.5 TFLOPS using FP64 Tensor Cores).

University & Research

Preferred for academia due to broad software support and lower hourly cost compared to H100s.

A100 vs. The Competition (2026)

Is the A100 still worth it? Absolutely.

| Feature | Nvidia A100 (80GB) | Nvidia H100 | RTX 4090 |
|---|---|---|---|
| Primary Use | Production Workhorse | Bleeding Edge Training | Dev / Entry AI |
| VRAM | 80GB HBM2e | 80GB HBM3 | 24GB GDDR6X |
| Interconnect | NVLink (600 GB/s) | NVLink (900 GB/s) | None (PCIe) |
| FP8 Support | No | Yes | Yes |
| Cost Efficiency | High | Medium | High |

Scalability & Cluster Options

Scale from a single card to a SuperPOD architecture with our flexible A100 deployments.

Single Node (PCIe)

Perfect for inference endpoints and development environments. Available with Intel Xeon Gold or AMD EPYC CPUs.

HGX Clusters (SXM4)

4-GPU (Redstone) and 8-GPU (Delta) HGX baseboards for large model training over an NVLink mesh.

Networking

ConnectX-6 SmartNICs providing up to 200Gb/s throughput per card.

Storage

Local NVMe RAID or Network Storage

OS Support

Ubuntu, Rocky Linux, Windows Server

Environment

Docker, Kubernetes, Slurm

Power

N+1 Redundant Power Supplies

Global Data Center Locations

Deploy your A100 server in the region closest to your users or compliance requirements.

Tokyo, Japan

Asia-Pacific hub. Low latency for East Asia.

London, UK

Western Europe & GDPR-friendly deployments.

Los Angeles, USA

West Coast US & Pacific Rim coverage.

Almere, Netherlands

Continental Europe with excellent EU connectivity.

Technical FAQ: Nvidia A100

Common questions regarding A100 configuration and capabilities.

Should I choose the 40GB or 80GB version?

For modern LLMs, we highly recommend the 80GB version. The extra memory lets you fit larger models and use bigger batch sizes, significantly speeding up processing. The 40GB version is best suited for HPC workloads, smaller inference tasks, or budget-conscious deployments where full model VRAM isn't required.
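A rough way to choose between the two variants is to estimate the memory the model weights alone will occupy. The sketch below is only a first approximation: `weights_gb` and `fits` are hypothetical helper names, and the 80% headroom factor is an assumed allowance for activations, KV cache, and framework overhead, not a measured value.

```python
# Rough VRAM check: do the model weights fit on a 40GB or 80GB A100?
# Covers weights only; training needs far more (gradients, optimizer state).

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weights_gb(params_billions: float, bytes_per_param: float) -> float:
    """Weight memory in GB (1 GB = 1e9 bytes)."""
    return params_billions * bytes_per_param


def fits(params_billions: float, bytes_per_param: float,
         vram_gb: float, headroom: float = 0.8) -> bool:
    """True if weights fit within the assumed usable fraction of VRAM."""
    return weights_gb(params_billions, bytes_per_param) <= vram_gb * headroom


for size_b, dtype in [(13, "fp16"), (30, "fp16"), (70, "int4")]:
    need = weights_gb(size_b, BYTES_PER_PARAM[dtype])
    print(f"{size_b}B {dtype}: ~{need:.0f} GB weights | "
          f"40GB: {fits(size_b, BYTES_PER_PARAM[dtype], 40)} | "
          f"80GB: {fits(size_b, BYTES_PER_PARAM[dtype], 80)}")
```

Under these assumptions a 13B model in FP16 fits on either card, while a 30B FP16 model or a 4-bit 70B model needs the 80GB version.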

Can I train GPT-level models on A100s?

Yes. While the H100 is faster, the A100 is fully capable of training very large models. Many well-known foundation models released between 2020 and 2023, including BLOOM, OPT-175B, and Llama 2, were trained on A100 clusters. (The original GPT-3 predates the A100 and was trained on V100 GPUs.)

Does the A100 support Ray Tracing?

No. The A100 is a pure compute card ("Headless"). It has no RT cores or display outputs. For rendering or graphics workloads, please see our L40S or RTX 4090 pages.

What is Multi-Instance GPU (MIG)?

MIG allows you to partition a single A100 GPU into up to seven isolated instances, each with its own dedicated memory, cache, and compute cores. This is ideal for maximizing utilization when running multiple smaller inference jobs simultaneously.
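To make the partitioning concrete, the sketch below picks the smallest MIG profile that covers a job's memory requirement and reports how many such instances one GPU can host. The profile names and per-GPU instance limits follow Nvidia's MIG documentation as we understand it; the table layout and the `smallest_profile` helper are hypothetical, and usable memory per instance is slightly below the nominal figure.

```python
# Pick a MIG profile for a given per-job memory requirement.
# Entries: (profile name, nominal memory in GB, max instances per GPU).
from typing import Optional, Tuple

MIG_PROFILES = {
    "A100-40GB": [("1g.5gb", 5, 7), ("2g.10gb", 10, 3), ("3g.20gb", 20, 2),
                  ("4g.20gb", 20, 1), ("7g.40gb", 40, 1)],
    "A100-80GB": [("1g.10gb", 10, 7), ("2g.20gb", 20, 3), ("3g.40gb", 40, 2),
                  ("4g.40gb", 40, 1), ("7g.80gb", 80, 1)],
}


def smallest_profile(gpu: str, needed_gb: float) -> Optional[Tuple[str, int]]:
    """Return (profile, max instances) for the smallest profile that fits."""
    for name, mem_gb, max_instances in MIG_PROFILES[gpu]:
        if mem_gb >= needed_gb:
            return name, max_instances
    return None  # the job does not fit on a single GPU of this type


for need in (8, 18, 30):
    print(f"{need} GB job on A100-80GB -> {smallest_profile('A100-80GB', need)}")
```

For example, a job that needs about 8GB maps to the smallest slice, so a single 80GB card could serve seven such jobs in isolation.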

Do you support HGX A100 clusters?

Yes, we offer HGX A100 configurations (4-GPU and 8-GPU) connected via NVLink, allowing the GPUs to function as a single massive accelerator for training large models.

Is root access provided?

Yes, all dedicated server rentals include full root SSH access, letting you install any custom drivers, Docker containers, or ML frameworks (PyTorch, TensorFlow, JAX) you require.
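With root access, a common first step is to confirm which GPUs the server exposes. The sketch below wraps the `nvidia-smi` query interface, assuming the Nvidia driver is already installed; `parse_gpu_csv` and `list_gpus` are hypothetical helper names, and the sample line in the comment is illustrative rather than captured from a real machine.

```python
# List installed GPUs by parsing nvidia-smi's CSV query output.
import subprocess
from typing import List, Tuple

QUERY = ["nvidia-smi", "--query-gpu=name,memory.total",
         "--format=csv,noheader,nounits"]


def parse_gpu_csv(text: str) -> List[Tuple[str, int]]:
    """Parse lines such as 'NVIDIA A100-SXM4-80GB, 81920' (memory in MiB)."""
    gpus = []
    for line in text.strip().splitlines():
        # Split on the last comma so a comma in the name cannot break parsing.
        name, mem_mib = (field.strip() for field in line.rsplit(",", 1))
        gpus.append((name, int(mem_mib)))
    return gpus


def list_gpus() -> List[Tuple[str, int]]:
    """Run nvidia-smi on the local machine; raises if the tool is missing."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_csv(result.stdout)
```

Calling `list_gpus()` on the server returns one `(name, memory_mib)` tuple per GPU, which is enough to check that the delivered configuration matches the order.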

Which CPU options are available with A100 servers?

Our A100 servers are available with both Intel Xeon Gold (e.g. 6230, 6326, 6336Y) and AMD EPYC (e.g. 7313, 7813) processors, depending on location and configuration. The third-generation Xeon (63xx) and EPYC platforms provide PCIe Gen4 bandwidth for GPU workloads; the second-generation Xeon Gold 6230 is a PCIe Gen3 platform.

Which locations are available for A100 servers?

A100 dedicated servers are currently available in Tokyo (Japan), London (UK), Los Angeles (USA), and Almere (Netherlands). Availability of specific configurations may vary by location.